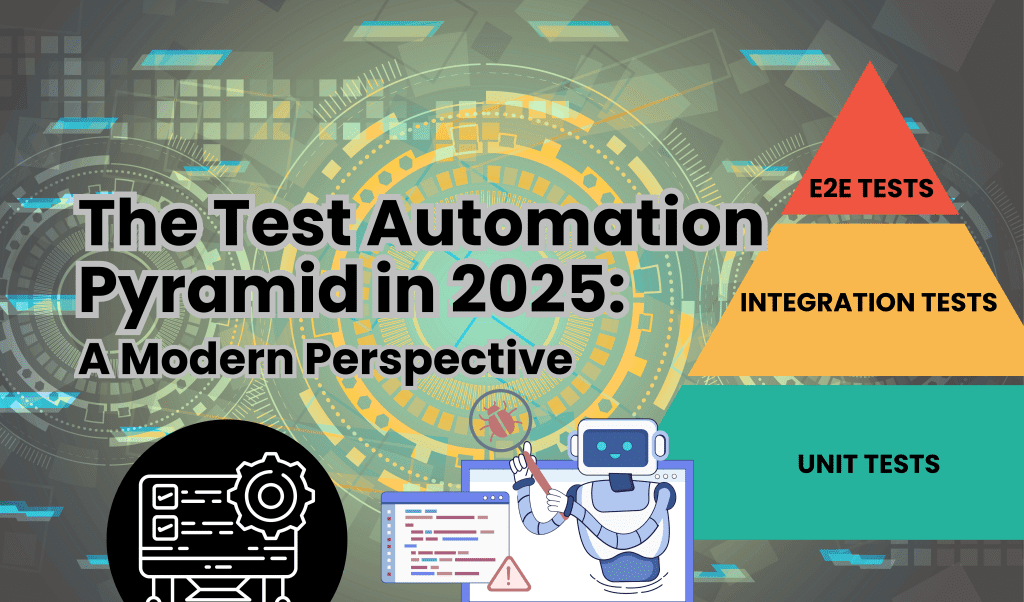

The Test Automation Pyramid in 2025: A Modern Perspective

Introduction

The test automation pyramid has long been a cornerstone for structuring software quality assurance. Mike Cohn’s original model advocated a broad base of unit tests, a middle layer of integration/service/API tests, and a slim top of end-to-end UI tests. Agile and DevOps teams have relied on this strategy to balance speed, coverage, and reliability. Fast forward to 2025: the pyramid continues to evolve, shaped by rising microservices adoption, cloud-native architectures, and AI-driven automation, requiring teams to rethink and modernize their approach to scalable quality.

Revisiting the Foundation: Unit Tests

The broad foundation of the pyramid, unit tests, remains as vital as ever. These tests validate small, isolated units of code and run in milliseconds, contributing to fast feedback in CI/CD pipelines. In modern architectures, unit tests anchor the pyramid’s efficiency. Well-designed unit tests quickly pinpoint problems, facilitate refactoring, and allow continuous deployment with confidence. By expanding coverage at the base, teams prevent defects from cascading into higher layers, slashing bug-fix costs and timelines by 30-50%.

Modern static analysis and AI-powered test generation, such as Mohs10 Technologies’ GenAI-driven QE platform, further fortify unit test strategies, proactively catching issues at code inception. Mohs10 automates unit test creation for microservices, leveraging mutation testing to achieve 95% code coverage in under 10 seconds, enabling teams to refactor with zero downtime. Test data factories, mocking libraries, and mutation testing tools are now standard additions, reinforcing the pyramid’s rock-solid bottom.

The Middle Layer: Integration and Service/API Testing

In monolithic systems, integration tests often validated a handful of system components. Microservices and distributed cloud apps have shifted this landscape dramatically.
In 2025, service/API tests validate interactions between dozens (sometimes hundreds) of microservices, databases, and third-party APIs, making this layer critical to scalable quality. Modern integration testing leverages contract-driven approaches, service virtualization, and real-time message simulations. API contract testing tools (like Pact and Postman), container orchestration, and chaos engineering tests help teams model real-world interactions and fault tolerance. This middle layer balances speed and coverage, efficiently surfacing business logic issues before touching the UI.

The biggest challenge? Maintaining reliable, fast-running tests amid asynchronous service calls and dynamic scaling. Leading teams overcome this with parallel test execution, cloud-native environments, and synthetic data management: strategies that preserve pipeline velocity while maximizing coverage. Mohs10 Technologies addresses this head-on with contract testing via Pact integrated into Kubernetes-orchestrated environments, simulating real-time healthcare API calls (e.g., EHR integrations) to guarantee 99.9% uptime and zero-downtime deployments.

The Top Layer: Targeted End-to-End UI Testing

End-to-end tests simulate real user journeys, validating that the entire system behaves correctly across integrated workflows. However, they are the slowest, most brittle, and most expensive to maintain. In 2025, top-performing teams limit E2E tests to critical business scenarios: checkout flows, login, registration, and vital integrations. Modern best practices include:

- Selective E2E coverage: Only the most essential user journeys.
- Stable environments: Cloud-based setups mirroring real production (but controlled).
- AI-driven test healing: Auto-fix locators and remove flakiness.
- Visual testing: Pixel-precise checks to catch UI regressions.

A well-balanced pyramid avoids over-investment in E2E, keeping the top thin and sustainable.
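The locator-healing practice mentioned for E2E tests can be approximated without any AI at all. The sketch below is a deliberately simple stand-in, not an AI technique: it tries an ordered list of fallback selectors against a fake page modeled as a dictionary. `find_with_fallback`, `LocatorResult`, and the selectors are hypothetical names chosen for illustration and are not the API of any real browser-automation tool.

```python
from dataclasses import dataclass
from typing import Mapping, Optional, Sequence


@dataclass(frozen=True)
class LocatorResult:
    element: str        # stand-in for a real element handle
    used_locator: str   # the selector that actually matched
    healed: bool        # True when the primary selector did not match


def find_with_fallback(
    dom: Mapping[str, str], locators: Sequence[str]
) -> Optional[LocatorResult]:
    """Try locators in priority order; return the first match, or None."""
    for index, locator in enumerate(locators):
        element = dom.get(locator)
        if element is not None:
            return LocatorResult(element, locator, healed=index > 0)
    return None


# Hypothetical page where the button's id changed between releases,
# so the primary "#checkout-btn" selector no longer matches anything.
dom = {
    "[data-testid='checkout']": "<button>",
    "text=Checkout": "<button>",
}
result = find_with_fallback(
    dom, ["#checkout-btn", "[data-testid='checkout']", "text=Checkout"]
)
```

Returning `healed` and `used_locator` lets a suite log which primary selectors have drifted, so they can be fixed instead of being silently masked by the fallback.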
How the Pyramid Adapts to Modern Architectures

Microservices, containers, and cloud-native systems have stretched the classic pyramid model:

- Service Meshes: Require mesh-aware testing for routing, security, and latency.
- Event-Driven Architectures: Demand asynchronous test strategies.
- Chaos Engineering: Injects failures to ensure graceful degradation.
- Infrastructure as Code: Test automation for cloud resources, policies, and configurations.

The modern pyramid is more flexible: sometimes a “skyscraper” model or a diamond-shaped structure emerges, accommodating complex service-to-service tests.

Practical Implementation Tips

- Continuous Testing: Automate everything (unit, API, and UI tests) in CI/CD.
- Test Data Management: Invest in synthetic data generation and automation for consistency.
- Shift-Left QA: Move more tests earlier in the pipeline, integrating with source control and build triggers.
- Test Observability: Use dashboards, logs, and analysis to pinpoint failures fast.
- Parallel Execution: Speed up feedback by running tests concurrently.
- DevSecOps Integration: Embed security tests at every layer for compliance.
- Partner with AI-QE Leaders: Team up with Mohs10 Technologies for custom pyramid audits and GenAI self-healing bots, and gain exclusive insights and Amazon rewards.
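The parallel-execution tip can be illustrated with Python's standard `concurrent.futures` module. This is a minimal sketch that assumes the checks are independent and share no state; `run_in_parallel` and the three `check_*` functions are hypothetical, and a real project would more likely rely on its test runner's own parallel mode.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict


def run_in_parallel(
    checks: Dict[str, Callable[[], None]], max_workers: int = 4
) -> Dict[str, str]:
    """Run independent check callables concurrently; report a status per name."""

    def run_one(check: Callable[[], None]) -> str:
        try:
            check()
            return "passed"
        except AssertionError as exc:
            return f"failed: {exc}"

    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {name: pool.submit(run_one, check) for name, check in checks.items()}
        # result() blocks until each check finishes, so every status is collected.
        return {name: future.result() for name, future in futures.items()}


def check_login_status() -> None:
    assert 200 == 200


def check_checkout_total() -> None:
    # Prices are kept in integer cents to avoid floating-point comparisons.
    assert 1999 * 2 == 3998


def check_known_regression() -> None:
    assert 1 + 1 == 3, "arithmetic regression"


results = run_in_parallel(
    {
        "login_status": check_login_status,
        "checkout_total": check_checkout_total,
        "known_regression": check_known_regression,
    }
)
```

Threads suit I/O-bound checks such as API calls. CPU-bound suites would need processes or separate CI workers instead.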
Teams succeeding with the pyramid leverage low-code platforms, self-healing scripts, and containerized test environments, empowering both technical and non-technical contributors.

Common Challenges (and How Modern Teams Overcome Them)

| Challenge | Solution |
| --- | --- |
| Too many E2E/UI tests | Shift coverage to unit/API layers, reduce brittle UI checks |
| Slow feedback and long pipelines | Parallelize, optimize, and remove redundant tests |
| Flaky tests and inconsistent results | Use AI-driven healing and better test data |
| Limited microservice coverage | Adopt contract tests, service virtualization, chaos testing |
| Poor test documentation | Use dashboards and test reporting with actionable insights |
| Data management bottlenecks | Automate synthetic test data, use fixtures and factories |

The Test Automation Pyramid in Agile and DevOps

Agile and DevOps environments benefit most from the pyramid’s strategy: continuous monitoring, rapid feedback, and scalable automation. Designs that emphasize a thick base (unit & API/service tests) deliver stable releases and keep technical debt low. Agile squads refine their test suites iteratively, applying automation platforms and reporting tools that foster transparency and accountability.

The Pyramid’s Future: 2025 and Beyond

Emerging trends continue to reshape the landscape:

- AI-Driven Test Case Generation: Using machine learning to design and update test flows.
- Autonomous QA Bots: Self-healing and self-optimizing test suites.
- Wireless & IoT Testing Integration: Expanding layers for device, protocol, and edge validation.
- Security-First QA: DevSecOps embeds security checks in every layer for compliance.
- Cloud Policy & Infrastructure Testing: Automated infrastructure as code (IaC) checks as part of the pyramid’s lower tiers.

The automation pyramid isn’t a static artifact. It’s a living strategy refined by each new technology wave, ensuring quality, speed, and security are never compromised.
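As one illustration of the infrastructure-as-code checks listed among the emerging trends, a policy test can be as small as a function that inspects a parsed resource definition. The sketch below assumes a made-up schema (`public_access`, `encryption_enabled`, `tags`) and a hypothetical `check_bucket_policy` helper. It does not reflect any particular cloud provider or IaC tool.

```python
from typing import Any, Dict, List


def check_bucket_policy(resource: Dict[str, Any]) -> List[str]:
    """Return the policy violations found in a storage-bucket definition."""
    violations: List[str] = []
    if resource.get("public_access", False):
        violations.append("bucket must not allow public access")
    if not resource.get("encryption_enabled", False):
        violations.append("bucket must enable encryption at rest")
    if "owner" not in resource.get("tags", {}):
        violations.append("bucket must carry an 'owner' tag")
    return violations


# Hypothetical resource definitions, as they might look after parsing an IaC file.
compliant = {
    "public_access": False,
    "encryption_enabled": True,
    "tags": {"owner": "qa-platform"},
}
risky = {"public_access": True, "tags": {}}

compliant_violations = check_bucket_policy(compliant)
risky_violations = check_bucket_policy(risky)
```

Because such checks need no running infrastructure, they run as quickly as unit tests, which is why they fit the lower tiers of the pyramid.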
Conclusion

The test automation pyramid in 2025 remains the gold standard for scalable, reliable quality, provided it evolves with microservices, cloud-native systems, and AI-driven testing. Prioritize a rock-solid base of unit tests, fortify the middle with contract-driven API and integration testing, and keep end-to-end UI tests razor-thin and self-healing. Embrace DevSecOps, chaos engineering, and autonomous QA bots to stay ahead of complexity. Start auditing your pyramid today: shift coverage left, parallelize execution, and integrate synthetic data with AI test healing.
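A first-pass audit of the pyramid's shape can be a few lines of code run over test counts per layer. The sketch below is one possible heuristic, not an established standard. The `audit_pyramid` function, the layer names, and the 10% ceiling on the E2E share are all assumptions chosen for illustration.

```python
from typing import Dict, List


def audit_pyramid(counts: Dict[str, int], max_e2e_share: float = 0.10) -> List[str]:
    """Flag test distributions that no longer resemble a pyramid."""
    total = sum(counts.values())
    if total == 0:
        return ["no tests found"]

    unit = counts.get("unit", 0)
    api = counts.get("api", 0)
    e2e = counts.get("e2e", 0)

    findings: List[str] = []
    if unit < api:
        findings.append("fewer unit tests than API tests: the base is too narrow")
    if api < e2e:
        findings.append("fewer API tests than E2E tests: the top is too heavy")
    if e2e / total > max_e2e_share:
        findings.append(
            f"E2E share is {e2e / total:.0%}, above the {max_e2e_share:.0%} ceiling"
        )
    return findings


# Hypothetical counts, e.g. gathered by tallying test files per directory.
healthy = audit_pyramid({"unit": 700, "api": 250, "e2e": 50})
top_heavy = audit_pyramid({"unit": 120, "api": 60, "e2e": 90})
```

Counting tests is a crude proxy, since it ignores runtime and flakiness. It is still a cheap signal to track in CI before investing in a deeper review.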